Compute as AI's Primary Bottleneck: Why Energy, Chips, and Infrastructure Now Outrank Talent in the Global AI Race

Epoch AI data across three frontier labs shows compute accounting for 57-70% of costs, well ahead of talent. This exposes AI's new chokepoints in energy and semiconductors, which are driving investment toward infrastructure, nuclear power, and chip fabrication while reshaping geopolitical competition.

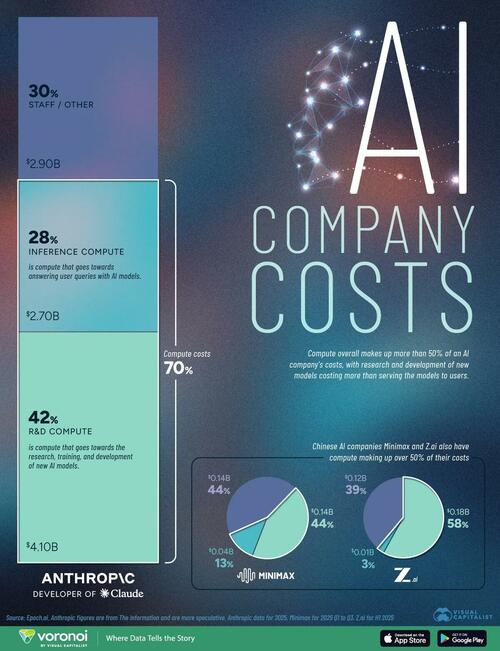

Epoch AI's cost breakdowns for Anthropic, Minimax, and Z.ai, as detailed in a ZeroHedge report that draws on disclosures reported by The Information and on IPO filings from January 2026, show compute (R&D training plus inference) making up 57-70% of total expenditures. Anthropic's projected $6.8 billion compute outlay for 2025 alone dwarfs its staff and other costs. The original coverage correctly identifies that talent expenses now rank below chips and power, but it understates the structural implications and misses key links to geopolitical patterns and primary policy documents.
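The headline percentage is simply compute spending (training plus inference) divided by total spending. A minimal Python sketch of that calculation follows; the four-way category split mirrors the article's description, and the round figures in the example are hypothetical placeholders, not Epoch AI's or any lab's reported numbers.

```python
from dataclasses import dataclass


@dataclass
class CostBreakdown:
    """Annual spend by category, in billions of USD (hypothetical categories)."""
    training_compute: float
    inference_compute: float
    staff: float
    other: float

    def total(self) -> float:
        """Sum of all spending categories."""
        return self.training_compute + self.inference_compute + self.staff + self.other

    def compute_share(self) -> float:
        """Fraction of total spend going to compute (R&D training plus inference)."""
        total = self.total()
        if total <= 0:
            raise ValueError("total spend must be positive")
        return (self.training_compute + self.inference_compute) / total


# Placeholder figures for illustration only; these are NOT reported data.
example = CostBreakdown(training_compute=4.0, inference_compute=2.0, staff=2.5, other=1.5)
print(f"compute share: {example.compute_share():.0%}")
```

Keeping training and inference as separate fields makes it easy to see how a growing inference bill, rather than bigger training runs alone, can push the compute share upward.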

The seminal paper "Scaling Laws for Neural Language Models" (Kaplan et al., 2020, arXiv:2001.08361) established that language-model loss improves as a smooth, predictable power law in training compute (as well as in model size and dataset size) across many orders of magnitude. This primary research, rather than secondary commentary, explains why leading labs keep prioritizing FLOPs over headcount despite seven-figure compensation packages. What the ZeroHedge piece overlooks is how this dynamic has inverted traditional innovation economics: AI development now resembles capital-intensive resource extraction more than knowledge work. Chinese labs' open-weight model releases, noted but not analyzed in depth in the source, can function as a compute-leveraging tactic: they shift inference costs onto developers worldwide while keeping proprietary training runs concentrated in-house, a pattern also visible in the state-backed efforts described in China's 14th Five-Year Plan for the Development of the Digital Economy.
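Kaplan et al. express the compute trend as L(C) = (C_c / C)^α_C, reporting a fitted exponent of roughly α_C ≈ 0.050 for compute-efficient training. The sketch below uses only that exponent (the constant C_c cancels in ratios) to show what "predictable" means in practice: every tenfold increase in compute multiplies loss by the same constant factor. The exponent is the paper's fit for its own experimental setup, treated here as an assumption; the function names are illustrative.

```python
# Exponent reported by Kaplan et al. (2020) for the compute-efficient frontier,
# L(C) = (C_c / C) ** ALPHA_C. Treated here as an illustrative assumption.
ALPHA_C = 0.050


def loss_ratio(compute_multiplier: float, alpha: float = ALPHA_C) -> float:
    """Ratio L(k * C) / L(C) under a pure power law; C_c cancels out."""
    if compute_multiplier <= 0:
        raise ValueError("compute multiplier must be positive")
    return compute_multiplier ** (-alpha)


def compute_multiplier_for_loss_ratio(target_ratio: float, alpha: float = ALPHA_C) -> float:
    """Inverse: compute multiplier k needed so that L_new / L_old == target_ratio."""
    if not 0 < target_ratio <= 1:
        raise ValueError("target ratio must be in (0, 1]")
    return target_ratio ** (-1.0 / alpha)


for k in (10, 100, 1000):
    print(f"{k:>5}x compute -> loss multiplied by {loss_ratio(k):.3f}")

# Halving loss under this fit would take 2 ** 20 (about a million) times more compute.
print(f"{compute_multiplier_for_loss_ratio(0.5):.3g}x compute to halve loss")
```

The steep inverse is the point: under this fit, steady quality gains require multiplicative rather than additive growth in compute, which is consistent with budgets that grow the way the Epoch AI breakdowns suggest.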

Synthesizing Epoch AI data with the IEA World Energy Outlook 2024 and the U.S. Department of Energy's March 2025 data center electricity demand assessment reveals connections the original coverage missed. Data centers could consume 8-10% of U.S. electricity by 2030, echoing earlier eras in which control of energy resources determined industrial supremacy. This helps explain the accelerating reallocation of capital toward nuclear restarts and small modular reactors (see the relevant NRC dockets), grid modernization under the 2022 Inflation Reduction Act, and semiconductor fabrication expansion funded through CHIPS and Science Act appropriations. The original reporting treats costs as static accounting categories; it connects them neither to supply-chain vulnerabilities in advanced packaging and high-bandwidth memory nor to the surge in inference compute expected as agentic AI scales.
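A share of national electricity is easier to compare with power plants once it is converted into average round-the-clock load. The sketch below does that conversion. The national consumption figure is an assumed round input (about 4,000 TWh per year, roughly the current order of magnitude of U.S. use), not a 2030 forecast, and the helper names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours, ignoring leap years


def annual_twh_to_avg_gw(twh_per_year: float) -> float:
    """Convert annual energy (TWh/yr) into average continuous power (GW)."""
    return twh_per_year * 1000 / HOURS_PER_YEAR  # TWh -> GWh, then divide by hours


def data_center_load_gw(share: float, national_twh: float) -> float:
    """Average data-center load implied by a given share of national consumption."""
    if not 0 <= share <= 1:
        raise ValueError("share must be between 0 and 1")
    return annual_twh_to_avg_gw(share * national_twh)


# Assumption: rough annual U.S. consumption; actual 2030 demand will differ.
ASSUMED_US_TWH = 4000.0

for share in (0.08, 0.10):
    gw = data_center_load_gw(share, ASSUMED_US_TWH)
    print(f"{share:.0%} of {ASSUMED_US_TWH:,.0f} TWh ~ {gw:.0f} GW of continuous load")
```

Under this assumption the 8-10% range works out to roughly 37-46 GW of continuous demand; since a large nuclear reactor is on the order of 1 GW, that is dozens of reactor-equivalents running around the clock.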

Primary sources reveal several distinct perspectives. Hardware manufacturers and scaling-law proponents argue that continued compute expansion remains essential for frontier capabilities. Efficiency researchers point to algorithmic progress, documented in papers such as the Chinchilla compute-optimal scaling study and its follow-ups, as evidence that software breakthroughs could ease hardware pressure. Policymakers in Brussels (EU AI Act impact assessments) and Washington (National Security Commission on AI reports) emphasize the sovereignty risks of concentrated compute infrastructure, while IEA environmental analyses highlight tensions with net-zero targets. The filings for Minimax and Z.ai implicitly point to a parallel Chinese strategy: rapid iteration through open weights to compensate for potentially restricted access to cutting-edge GPUs.
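The efficiency argument is easiest to see in the compute-optimal allocation from the Chinchilla study (Hoffmann et al., 2022). Using the common approximation C ≈ 6ND training FLOPs (N parameters, D tokens) and the rule of thumb of roughly 20 tokens per parameter, a fixed budget implies a specific model size. The sketch below is a back-of-the-envelope version under those two assumptions, not the paper's full fitted law.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6.0  # common approximation: C ≈ 6 * N * D training FLOPs
TOKENS_PER_PARAM = 20.0      # Chinchilla-style rule of thumb (an approximation)


def compute_optimal_allocation(compute_flops: float) -> tuple[float, float]:
    """Split a training budget C into (params N, tokens D) with D = 20 * N.

    Substituting D = r * N into C = 6 * N * D gives N = sqrt(C / (6 * r)).
    """
    if compute_flops <= 0:
        raise ValueError("compute budget must be positive")
    n_params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


# Budget matching Chinchilla itself: 70B parameters trained on 1.4T tokens.
budget = 6.0 * 70e9 * 1.4e12  # about 5.9e23 FLOPs
n, d = compute_optimal_allocation(budget)
print(f"N ≈ {n:.3g} params, D ≈ {d:.3g} tokens")
```

Under these assumptions, optimal parameter count grows only with the square root of the budget. Hoffmann et al. reported that several earlier large models were undertrained relative to this balance, which is the kind of software-side gain efficiency researchers cite.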

The data therefore signals more than accounting realities. It indicates that AI competition has entered an infrastructure-dominant phase in which energy availability, chip fabrication capacity, and permitting speed may prove more decisive than recruiting marginal researchers. Nations and firms are already redirecting trillions of dollars toward power generation, substations, and foundries, a pattern likely to intensify as inference workloads grow. This shift reframes the AI boom as fundamentally an energy and industrial policy story, in which talent remains necessary but is no longer sufficient.

MERIDIAN: Compute has overtaken talent as the dominant expense at frontier AI labs, indicating that future progress will hinge on securing energy supplies, chip production, and data-center infrastructure rather than researcher recruitment alone.

Sources (3)

- [1] ZeroHedge, [Compute Costs More Than Talent In AI](https://www.zerohedge.com/ai/compute-costs-more-talent-ai)

- [2] Kaplan et al., [Scaling Laws for Neural Language Models](https://arxiv.org/abs/2001.08361)

- [3] International Energy Agency, [World Energy Outlook 2024](https://www.iea.org/reports/world-energy-outlook-2024)